The Hague Summit for Accountability in the Digital Age

November 6-7th 2019 – Peace Palace – The Hague


The Hague Summit 2019

The Hague Summit will focus on safeguarding the role of the internet as a tool for personal, professional, and social engagement. I4ADA is taking concrete steps to increase access to knowledge, evidence-based trust and measures to foster accountability. The goals are to facilitate transparency, a common understanding, and thus to promote a maximum sustainable net benefit for people and societies worldwide.

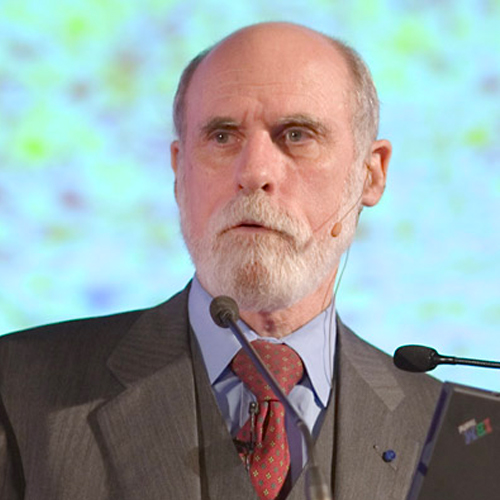

Below, Vint Cerf, co-inventor of the Internet, endorses the I4ADA Summit 2019 and explains the increasing relevance of accountability in the digital age.

2-day conference

The Hague Summit will be a 2-day conference bringing together a global multi-stakeholder community from national and local governments, international policymakers, civil society, NGOs, the ICT industry and platforms, as well as other relevant organizations and institutes. The delegates’ conclusions and recommendations will contribute to shaping a global path towards a responsible policy. Together, our aim is to foster accountability in and for the digital age.

Introducing the speakers

Ursula Owusu-Ekuful

Mrs Ursula Owusu-Ekuful is the Minister for Communications of the Republic of Ghana…

Cédric Wachholz

Cédric Wachholz heads UNESCO’s ICT in Education, Science and Culture section, which works also on…

Vint Cerf

Vinton G. Cerf is vice president and Chief Internet Evangelist for Google. He…

Jeff Bullwinkel

Jeff Bullwinkel serves as Microsoft’s Associate General Counsel and Regional Director of Corporate,…

Marija Pejčinović Burić

Marija Pejčinović Burić has worked as the 14th Minister of Foreign and…

Stuart Campo

Stuart Campo is the Team Lead for Data Policy and a Senior Fellow…

Jaroslaw Ponder

Mr Jaroslaw Ponder is Head of the ITU Office for Europe at the…

Arthur van der Wees

Arthur van der Wees, LLM studied and obtained his doctorate degree…

Kathalijne Buitenweg

Member of Parliament in the Netherlands in the subcommittees for Justice, Security, Police…

John Higgins CBE

Chair of DIGITALEUROPE’s Brexit Advisory Council. John Higgins has been the public…

Helen Brown

Helen Brown is Legal Counsel at the Permanent Court of Arbitration (PCA), an…

Mike Hinchey

Professor Mike Hinchey is Chair of IEEE UK & Ireland Section for 2018-2019….

Nanjira Sambuli

Panel member of the United Nations Secretary General’s High-Level Panel on Digital Cooperation (2018-19) Nanjira…

Sivaaji De Zoysa

Sivaaji De Zoysa comes from two highly respected and established families (4th…

Freerk Teunissen

Freerk Teunissen M.A. (1970) is a (psycholinguistic) advisor. He analyzed psycholinguistic techniques employed by journalists…

Charles Groenhuijsen

Charles Groenhuijsen (1954) has been the US Correspondent for the main public news…

Andrew Taussig

Andrew Taussig worked for 30 years in the BBC where he held the…

Urška Umek

Urška Umek is Secretary of the Committee of experts on quality journalism in…

Dr. Nad’a Kovalcikova

Dr. Nad’a Kovalcikova is a program manager and fellow at the Alliance…

Vincent Everts

As a serial entrepreneur he started multiple IT companies in networking, document management…

Joelle Casteix

Spokesperson for survivors of child sexual assault. Joelle Casteix is a leading global…

Oleg Volkosh

President of Media Arts Group holding and head and a partner of Mediaplus…

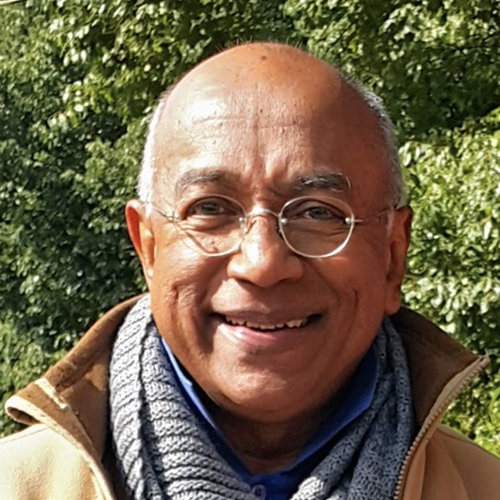

Cyril Pereira

Malaysian Cyril Pereira has lived 35 years in Hong Kong. He coaches Data…

Dr Berenice Boutin

Dr Berenice Boutin is a researcher in international law at the Asser…

See a full overview of all people speaking at The Hague Summit for Accountability in the Digital Age

PROGRAM

Official Program of The Hague Summit for Accountability in the Digital Age

Program for Tuesday, November 5th: Day of Arrival

November 5th

| Time | Program | By |
| --- | --- | --- |
| 18.30 | Drinks | |
| 19.00 | Welcome | Ronald Nomes, Director, City of The Hague |
| 19.15 | First Course – Dinner | |
| 19.35 | Dinner Speech | Jan Middendorp, Dutch Parliament – Initiator Commission Digital Future |
| 20.00 | Second Course – Dinner | |
| 20.45 | Third Course – Walking Dessert | |

Program for Wednesday, November 6th: First Conference Day

November 6th

Coffee & Refreshments (8.30 – 9.00)

| Time | Program | By |
| --- | --- | --- |
| 9.00 | Welcome | Saskia Bruines, Deputy Mayor, City of The Hague |
| 9.10 | Introduction | Stuart Campo, UNOCHA, Moderator |
| 9.15 | Keynote | Ursula Owusu-Ekuful, Minister of Communications, Ghana; Member of the Broadband Commission |
| 9.30 | Keynote | Cédric Wachholz, Chief of Section for ICTs in Education, Science & Culture, UNESCO |
| 9.45 | Video | Vint Cerf, Chief Internet Evangelist, Google |
| 9.50 | Keynote | Jeff Bullwinkel, Associate General Counsel and Director of Corporate, External & Legal Affairs, Microsoft Europe |
| 10.05 | Video | Ms Marija Pejčinović Burić, Secretary General, Council of Europe |
| 10.10 | Keynote | Jaroslaw Ponder, Head European Office, ITU |

Coffee break (10.30 – 11.00)

| Time | Program | By |
| --- | --- | --- |
| 11.00 | Panel discussion “Current State of Play” | Moderator: Arthur van der Wees, board member I4ADA. Panel members: Sivaaji de Zoysa, Managing Director Gaia Investments ltd, Global Chair YPO Impact Networks Council; Nanjira Sambuli, Senior Policy Manager, World Wide Web Foundation; Prof Mike Hinchey, President International Federation for Information Processing (IFIP); John Higgins, Chairman Global Digital Foundation; Helen Brown, Legal Counsel Permanent Court of Arbitration; Vadim Belyakov, Founder Not Alone; Kathalijne Buitenweg, Chair Commission Digital Future Dutch Parliament |

Lunch (12.30 – 14.00)

| Time | Program | By |
| --- | --- | --- |
| 14.00 | Panel discussion “Accountability & (social) media and journalism” | Moderator: Freerk Teunissen, NICJ. Panel members: Andrew Taussig, former Director BBC; Nad’a Kovalcikova, Program manager and fellow The German Marshall Fund of the U.S.; Cyril Pereira, AsiaSentinel; Oleg Volkosh, President Mediaplus Group, Chair YPO Europe; Charles Groenhuijsen, Dutch Journalist; Joelle Casteix, Director Zero Abuse Project; Vincent Everts, Trendwatcher; Urška Umek, Committee of experts on quality journalism in the digital age, Council of Europe |

Tea break (15.30 – 16.00)

| Time | Program | By |
| --- | --- | --- |
| 16.00 | Round table discussion “Accountability & (social) media and journalism” | |

The organizers want to capture the collective knowledge of everybody attending the Summit. What are your ideas and solutions for Accountability in the Digital Age? In other words, which instruments (high-level, practical, organizational, digital, standards, regulatory, enforcement and the like) are potentially available and feasible, and how can they be organized in view of accountability?

Therefore, the panel discussions on (Social) Media & Journalism, Artificial Intelligence, and Cyber Security & Cyber Peace will each be followed by Round Table Discussions to address the question above. There will be about 10 moderated round tables running simultaneously using the ‘World Café’ format. The World Café methodology is a simple, effective, and flexible format for hosting large group dialogue that allows delegates to contribute to the discussion directly. For more information, please refer to: www.theworldcafe.com

Topics:

1. How to come to evidence-based news feeds and related transparency in the Digital Age?
2. How to professionally and safely work in a cyber-physical world that is more and more ‘smart’?
3. Digital & Journalism in Ghana (case study), presented by Musah Inuwa, Confluent Media, Ghana
4. Digital & Journalism in Hong Kong (case study), presented by Cyril Pereira, Hong Kong

| Time | Program | By |
| --- | --- | --- |
| 17.30 | Eulogy for Dr. Indrajit Banerjee, former Director Knowledge Societies, UNESCO, instigator of the Summit on Accountability in the Digital Age | Andrew Taussig |

Reception (17.45 – 18.45)

Program for Thursday, November 7th: Second Conference Day

November 7th

Coffee & Refreshments (8.30 – 9.00)

| Time | Program | By |
| --- | --- | --- |
| 9.00 | Panel discussion “Accountability & Artificial Intelligence” | Moderator: Berenice Boutin, Researcher International Law, Asser Institute. Panel members: Peter Batt, Director General, German Federal Ministry of the Interior, Building and Community; Stephen Ibaraki, Managing Partner REDDS Capital Investors; Prof. Tatjana Welzer Družovec, University of Maribor, Institute of Informatics; Irakli Beridze, Director AI and Robotics lab, UNICRI; Christina Caljé, CEO & Co-founder Autheos; Lukas Roffel, Chief Technology Officer Thales; Prof. dr. Holger Hoos, Head CLAIRE; Evert Haasdijk, AI Expert, Deloitte; Clementina Barbaro, Committee on Artificial Intelligence, Council of Europe; Cédric Wachholz, Chief of Section for ICTs in Education, Science and Culture, UNESCO |

Coffee break (10.30 – 10.45)

| Time | Program | By |
| --- | --- | --- |
| 10.45 | Round table discussion “Accountability & Artificial Intelligence” | |

About 10 moderated round tables run simultaneously in the ‘World Café’ format (www.theworldcafe.com), asking delegates for their ideas and solutions for Accountability in the Digital Age.

Topics:

1. How can AI facilitate Accountability?
2. How can Accountability facilitate AI?
3. AI & Bias: How to address it?
4. International law for AI accountability: the role that (existing) international norms and institutions can play in addressing the accountability challenges raised by AI.
5. AI and criminal responsibility, presented by Marta Bo, Asser Institute

Lunch (12.30 – 14.00)

| Time | Program | By |
| --- | --- | --- |
| 14.00 | Panel discussion “Accountability & Cyber Security and Cyber Peace” | Moderator: Jacques Kruse-Brandao, co-founder, Charter of Trust. Panel members: Chris Painter, Former Coordinator for Cyber Issues US State Department, GCSC; Arda Gerkens, Senator Dutch Parliament; Judge Chang-ho Chung, International Criminal Court; Prabhat Agarwal, Deputy Head of Unit, Online Platforms & eCommerce, European Commission; Pavan Duggal, Advocate Supreme Court of India; Catherine Garcia-van Hoogstraten, Lecturer & Researcher The Hague University of Applied Sciences; Jaroslaw Ponder, Head of Europe Office ITU; Paul Timmers, Research fellow Oxford University, Digital Enlightenment Forum |

Coffee break (15.30 – 16.00)

| Time | Program | By |
| --- | --- | --- |
| 16.00 | Round table discussion “Accountability & Cyber Security and Cyber Peace” | |

About 10 moderated round tables run simultaneously in the ‘World Café’ format (www.theworldcafe.com), asking delegates for their ideas and solutions for Accountability in the Digital Age.

Topics:

1. How can Cybersecurity facilitate Accountability?
2. How can Accountability facilitate Cybersecurity?
3. AI & Peacekeeping: How to address the upsides and downsides of AI in a Military Context
4. Smart, Secure & Inclusive Society, presented by Bram Reinders, Institute for Future of Living
5. How to leverage the potential of Digital data while being respectful of beneficiaries’ rights? Presented by Joachim Ramakers, Red Cross
6. AI and the changing battlefield: whether, how and to what extent AI reshapes the ways of conducting warfare. Presented by Rebecca Mignot-Mahdavi, Asser Institute
7. AI and Border Control. Presented by Dimitri van den Meerssche, Asser Institute

| Time | Program | By |
| --- | --- | --- |
| 17.30 | Wrap up | |

Close (17.45)

Accountability in the Digital Age

Panel discussion on Accountability & (Social) Media and Journalism

The notion of checks and balances lies at the heart of most Western democracies. These checks and balances have largely applied to the branches of government, keeping in check the large institutions that hold control over their societies. Less thought, however, has been given to checking private actors, such as tech monopolies with increasing power over our lives. It is crucial to consider how to proceed with regard to the balance of power in a democracy.[i]

Digital media forms have allowed citizens all over the world to connect, and to help each other hold political powers to account. But in recent years, the positive effect of this democratization of information seems to have flipped, as governments and organizations struggle with issues like the spread of ‘fake news’ and propaganda and the safeguarding of citizens’ personal privacy and safety.

In a bid to ensure powerful private actors such as Facebook work to improve in this regard, various methods have been suggested. For example, in April of 2019 a UK white paper indicated that it was time to move on from industry self-regulation and start enforcing standards for content regulation by holding individuals in such companies liable.[ii] Meanwhile, Facebook’s Mark Zuckerberg has said he wants governments to take up a more active role in regulating for the purposes of data use, privacy and election integrity, calling for a “globally harmonized framework.”[iii]

Supporters remain in favor of far-reaching freedom of speech online, while critics argue that “rather than uniting and informing, social media deepens social and political divisions and erodes trust in the democratic process.”[iv] Positive outcomes are visible, such as the facilitation of social movements or democratic uprisings, but it is important to consider whether certain platforms or organizations have systemic flaws that pose a threat to the world’s democratic institutions, and whether we have the ways and means to hold them accountable.

Panel discussion on Accountability & Artificial Intelligence

In just the past few years, the world has seen a sharp spike in attention to the governance of AI. Around twenty countries have adopted national AI strategies. Further examples include France and Canada launching an International Panel on AI, and the US Defense Innovation Board devising ethical principles for the Pentagon’s use of military AI.[v]

Within AI, there is a difference between Artificial Narrow Intelligence (ANI) and Artificial General Intelligence (AGI). The former refers to machine intelligence equaling or even exceeding human intelligence but only for specific tasks, such as IBM’s chess-playing computer, Google’s AlphaGo, or the robot pilot that recently passed its flying test in the US. AGI concerns machine intelligence that matches human performance across any task.[vi]

At the moment, private actors and companies “are creating AI-based solutions to everything from grading students to assessing immigrants for criminality,” often bound by little more than their own ethical statements.[vii] And this issue does not only prevail in the private sector. As predictive algorithms become normalized in law enforcement, they will most likely also move into the (inter)national security spheres.[viii]

Our legal system is largely geared towards human agents. Think, for example, of how criminal law requires there to be active intent behind actions, which results in a certain punishment. At the same time, however, technology will continue to move towards, and will eventually go beyond, Artificial General Intelligence. Complex systems will increasingly make decisions that are difficult to predict beforehand or explain afterward. One of the biggest issues to have arisen in the past few years of increasingly autonomous, ‘black box’ decision-making in technology is therefore who can or should be held responsible if AI causes harm, an issue often referred to as an ‘accountability gap’.

The three main problems that may arise from this accountability gap, if not addressed soon enough, are causality, justice, and compensation.[ix] Firstly, it is hard to make a causal link from harm back to a specific person or organization if the harm was due to a computer decision. Secondly, the legal system’s penalties are mostly geared towards punishing humans (or entities that are made up of humans). Fines or prison sentences are not a deterrent for algorithms, but without effective penalties it is difficult to ensure justice is served. Lastly, and in relation to the former two problems: who is to pay compensation to victims of accidents caused by autonomous AI?

Panel discussion on Accountability & Cyber Security and Cyber Peace

Until fairly recently, most governments saw the digital sphere as separate from physical threats to national safety and sovereignty. However, the coming of cyber-physical systems, complex digital networks and the internet of things, along with more sophisticated cyberattacks, has changed this view.[x]

Algorithms have rapidly increased in importance for national security, now protecting nations from attempted cyberattacks around the clock. The value of these algorithms is their ability to immediately respond to attacks. Therefore, as they become more responsible for not only the digital sphere but also for the interconnected patchwork of critical infrastructure, there must be sufficient and timely consideration of how to ensure that algorithm ‘behavior’ is predictable and explainable enough that we can trust them as a basis for more of our peace and security. The more we rely on self-learning systems to protect us, the sooner we must find alternative frameworks for transparency and accountability.[xi]

The difficult process of agenda-setting and accepting international norms for cybersecurity has been ongoing for a while, but once norms are agreed upon, accountability always remains the next challenge. Aside from this, the relatively low speed at which countries within international coalitions or institutions manage to agree upon norms has left many non-state actors unshielded in the meantime. Many companies have started building their own agreements to ensure a level of mutual cybersecurity, in the form of both normative and operational alliances.[xii]

[i] Deeks, Ashley. “Facebook Unbound?” Virginia Law Review Online 105 (February 2019): 1–17.

[ii] Stewart, Heather, and Alex Hern. “Social Media Bosses Could Be Liable for Harmful Content, Leaked UK Plan Reveals.” The Guardian, April 4, 2019, sec. Media. https://www.theguardian.com/technology/2019/apr/04/social-media-bosses-could-be-liable-for-harmful-content-leaked-uk-plan-reveals.

[iii] Press Association. “Mark Zuckerberg Calls for Stronger Regulation of Internet.” The Guardian, March 30, 2019, sec. Media. https://www.theguardian.com/technology/2019/mar/30/mark-zuckerberg-calls-for-stronger-regulation-of-internet.

[iv] Intelligence Squared Debates: Social Media Is Good for Democracy. IQ2US, 2018. https://www.intelligencesquaredus.org/debates/social-media-good-democracy-0.

[v] Simonite, Tom. “Canada, France Plan Global Panel to Study the Effects of AI.” Wired, June 12, 2018. https://www.wired.com/story/canada-france-plan-global-panel-study-ai/; Tucker, Patrick. “Pentagon Seeks a List of Ethical Principles for Using AI in War.” Defense One, January 4, 2019. https://www.defenseone.com/technology/2019/01/pentagon-seeks-list-ethical-principles-using-ai-war/153940/; Zwetsloot, Remco, and Allan Dafoe. “Thinking About Risks From AI: Accidents, Misuse and Structure.” Lawfare, February 11, 2019. https://www.lawfareblog.com/thinking-about-risks-ai-accidents-misuse-and-structure.

[vi] De Spiegeleire, Stephan, Matthijs Maas, and Tim Sweijs. “Artificial Intelligence and the Future of Defense: Strategic Implications for Small- and Medium-Sized Force Providers.” The Hague: The Hague Centre for Strategic Studies, 2017. https://www.hcss.nl/sites/default/files/files/reports/Artificial%20Intelligence%20and%20the%20Future%20of%20Defense.pdf.

[vii] Coldewey, Devin. “AI Desperately Needs Regulation and Public Accountability, Experts Say.” TechCrunch, December 7, 2018. http://social.techcrunch.com/2018/12/07/ai-desperately-needs-regulation-and-public-accountability-experts-say/.

[viii] Deeks, Ashley. “Predicting Enemies.” Virginia Law Review, Virginia Public Law and Legal Theory Research Paper, 104 (2018). https://ssrn.com/abstract=3152385.

[ix] Bartlett, Matt. “Solving the AI Accountability Gap.” Medium, April 5, 2019. https://towardsdatascience.com/solving-the-ai-accountability-gap-dd35698249fe.

[x] Dobrygowski, Daniel. “Why Companies Are Forming Cybersecurity Alliances.” Harvard Business Review, September 11, 2019. https://hbr.org/2019/09/why-companies-are-forming-cybersecurity-alliances.

[xi] Deeks, Ashley, Noam Lubell, and Daragh Murray. “Machine Learning, Artificial Intelligence, and the Use of Force by States.” Journal of National Security Law & Policy, Virginia Public Law and Legal Theory Research Paper, 10 (November 16, 2018). https://papers.ssrn.com/abstract=3285879.

[xii] Dobrygowski, Daniel. “Why Companies Are Forming Cybersecurity Alliances.” Harvard Business Review, September 11, 2019. https://hbr.org/2019/09/why-companies-are-forming-cybersecurity-alliances.

Partners 2019 Summit

The Hague Summit for Accountability in the Digital Age is powered by:

International media Partner

Local media Partners

Join the Institute for Accountability in the Digital Age

Our work is only possible with the continued financial support of donors and partners. If you want to become a supporter of the I4ADA, please contact us.

Institute for Accountability in the Digital Age (I4ADA)

Postal address I4ADA

Lange Voorhout 1

2514 EA The Hague

The Netherlands

E: contact@i4ada.org

P: +31 (0)70 3184840
